Meta’s Avocado AI Model is Delayed. Good.

Meta’s $135 Billion Bet and Our AI Expectations

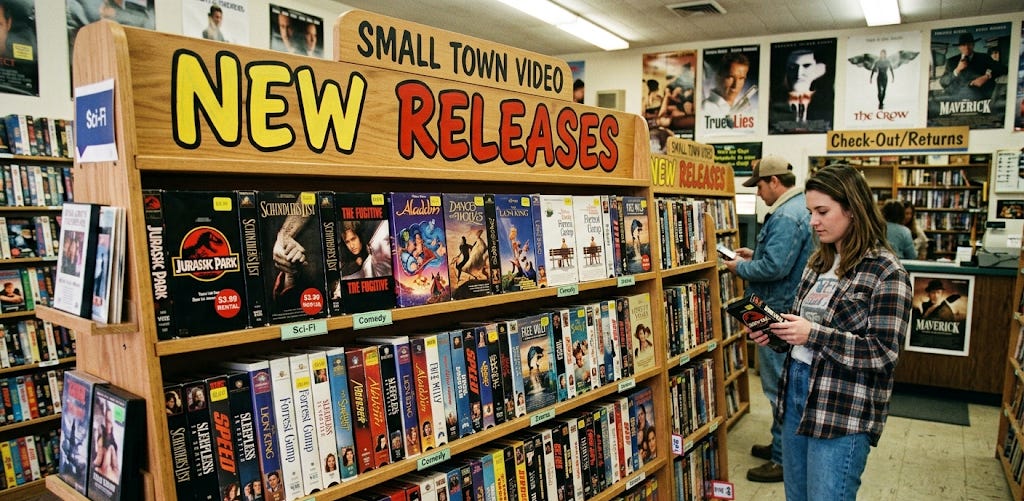

My hometown did not have a Blockbuster Video. For many years, there was a small, local video rental shop between a laundromat and a hoagie shop. A few years later, another local store replaced it in the newly built strip mall that housed the new Walmart. Renting a movie felt like a big deal. It was not a common occurrence in our house, so when it did happen, it was an event. I often felt like I needed to watch the rented movie AT LEAST twice before it was returned, because when would this opportunity come again?

I felt that way as a child because I’m old enough to remember a time when not everything was available all the time. Once a movie left the theater, it could be months or even a year before it was available on video (a lifetime to someone my age at the time). Maybe it came out on HBO, if you were lucky enough to have a subscription. That’s why the New Releases rack at your local video rental store felt like a big deal. You could usually find one of the 1-3 copies of Weekend at Bernie’s or Rambo: First Blood Part II, but that new movie that was recently in theaters? Good luck. Wait your turn.

Today, we are so accustomed to a river of content that never ends that there are entire business models built around it. I’ll admit that if there’s a series I want to watch on a streaming service and the episodes come out one per week, I won’t start it until all the episodes are available.

Wait a week for a new episode? What is this? The Soviet Union?

So insatiable is our appetite for new content that it extends beyond our feeds and scrolling. In the AI era, we also expect a constant churn of new AI models to play with, experiment on, and comment on (thereby generating more content). We’ve grown used to model releases arriving on a regular cadence. Not every day or every week, but the AI industry and its many watchers create demand around model releases. Those releases generate media attention and make investors happy.

Earlier this month, Meta announced that its next AI model, internally codenamed “Avocado,” was delayed. Not helping Meta’s case: OpenAI released GPT-5.4 on March 5th, and Anthropic released Claude Opus 4.6 on February 5th.

That’s more like it, right?

One new model a month. Feels good.

While many reports pointed to Meta’s $115-$135 billion investment in AI infrastructure and its “clear” lag behind the major frontier models, there’s a better question to ask.

Do we want frontier models to be developed at this pace?

This is not an argument for an AI moratorium or for slowing innovation, but for accepting that models of this size and capability should be tested, tested, and tested again. Instead of criticizing a company for its “slow” rollout of a model, perhaps we should adjust our expectations.

Maybe we need to wait a little longer for our favorite movie to hit that New Releases rack, but in the case of AI, it’s important that the new release is safe to use. If Meta deserves any criticism, it is that it held up the release over performance concerns rather than for safety testing. Building in safety, even if it takes longer, could be the industry’s key to winning over the many individuals and industries that distrust AI precisely because of this release schedule. That can change, but not if we point and laugh every time a model does not come out when we want it to.

The Right Level of Ripeness

I love avocados, and I definitely had to learn how to tell when one is ripe. It’s not something you can really explain to someone in words.

“Squeeze it. If it’s soft, but not too soft, it is ready. Just make sure you can kind of squish it with your fingers, but not so much that it’s squishy.”

There’s no way to do it unless you’ve felt multiple avocados and been burned by a few that were overripe or underripe.

AI is not so different, and I must commend Meta for an internal codename that lends itself to excellent cover art and analogies. How do we know when AI is “ready?” What does ready mean?

AI is a non-linear, non-deterministic system that we are constantly improving and constantly finding new ways to break. Unlike traditional cybersecurity testing of more linear, predictable systems, there’s no reliable way to know whether an AI product, let alone a frontier model, is “ready.” The reporting on the Avocado delay indicates that Meta held the release because the model was not performing well enough against benchmarks. Performance is certainly one parameter for judging when AI is ready to release to the public, but it is far from the only one.

Much like a squishy, but not TOO squishy, avocado, AI systems require a sweet spot of training, performance testing, and safety testing. Meta is clearly focused on the performance side, having watched Claude Opus 4.6, Claude Code, OpenAI Codex, and Gemini 3 surpass the capabilities it promised. Media outlets seized on this, pointing to Meta’s $135 billion AI infrastructure bill and the millions it paid AI/ML engineers as a sort of “gotcha” moment.

Media scrutiny of a public company spending hundreds of billions of dollars is not unreasonable, but the reasons behind that scrutiny could use a change in perspective. In a time when everything is available all the time, our demand for newness is not confined to content. It is starting to seep into our demand for new technologies, and that may come at our peril.

Other Fruits

Rumor has it that the next model behind Avocado at Meta is also fruit themed: Grapefruit. But the Meta lesson is not just about Avocado or Grapefruit or whatever AI model is next. It’s about the relationship between our demand and the development of technology.

Walking into a video store in the early 1990s was an exercise in scarcity. Even the biggest new releases had limited copies, so you had to do something nearly unthinkable in 2026: wait. In AI, we clamor loudly for a new model that will blow our socks off for one work week, after which it becomes so passé that we can hardly wait for the next one, as if it had been out of theaters for a year. In a market-driven economy, manufacturers will naturally seek to meet that demand. Its pace, however, is so intense that they will need to shorten their timelines to keep up, and shortened timelines in manufacturing mean something gets skipped. We aren’t talking about an assembly line for Oldsmobiles anymore; we are talking about millions of lines of code and some of the most powerful information engines in human history. Do we want things to be skipped?

Safety testing in AI broadly deserves an article of its own. That Meta is holding up its model release over performance concerns is not surprising in itself, but it could have made a far bigger market impact had it said it was holding the model to complete safety testing. Safety in AI is not red tape; it’s risk mitigation and an enabler of innovation. A strict focus on performance benchmarks misses two of the biggest challenges AI faces: trust and confidence. Delaying a model release is not inherently a problem if the company is doing it for the right reasons.

Beyond AI, other emerging technologies are beginning to suffer from the same problem. In quantum computing, private companies that have funded decades of research are starting to feel pressure to show returns. Releases from Google, AWS, and Microsoft in late 2024 and 2025 made big claims, and quantum computing stocks rose across the board. But given the power of quantum computing, do we want those technologies to rush to market?

It is natural, and particularly American, to want new things quickly. The speed of information in 2026 only intensifies that demand. While we are in an era of ultra-fast information, we are also in an era of extraordinary technology development. Unlike in earlier technological eras, what we are building is not limited to a state or a military. Nuclear technology was initially limited to military use, and today it is still not broadly available to the public. AI is. Quantum computing likely will be.

The push to grow platform users is driving a commercialization path that mirrors the rise of social media. The more people on the platform, the better. But just like social media, AI lacks safety testing that matches the breadth of its reach. That gap persists because our demand for the newest model is so extreme that after about five to seven days with the latest release, we already want a new one. There’s no reason to believe quantum computing will be different if it is commercialized the same way.

I’ll admit that I don’t want to wait a week for a new episode of a TV show, even though I spent more of my life waiting than not. I also respect the desire to play with the newest toy (and write about it). But we all need to ask ourselves what we want. Do we want an unending funnel of new toys, no matter the cost of bringing them to us? Or do we want technology that is designed for humans? Technology that builds in safeguards, audits its outputs, and prioritizes the safety and values we espouse. Meta held back Avocado over performance, and the media went wild. Holding back a product until it is ready for public use is not a bad thing. If the reasons for holding it back start to shift toward safety, we will really be getting somewhere.